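We don't care what the maximum value of the likelihood function is; we care where it occurs. Because the logarithm is strictly increasing, a positive function $f(x)$ and $\log(f(x))$ reach their maximum at the same $x$, so we prefer whichever of the two has the easier derivative.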
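As a quick numerical check of this idea, here is a minimal Python sketch. It assumes a Bernoulli (coin-flip) model and a made-up dataset, and the names `data`, `likelihood`, and `log_likelihood` are illustrative rather than taken from any library. It searches a grid of candidate parameters and finds that the likelihood and the log-likelihood peak at the same place, even though their maximum values differ.

```python
import math

# Hypothetical coin-flip data (1 = heads), chosen only for illustration.
data = [1, 0, 1, 1, 0, 1, 1, 1]

def likelihood(p, xs):
    """Bernoulli likelihood: a product of n terms, one per observation."""
    result = 1.0
    for x in xs:
        result *= p if x == 1 else (1 - p)
    return result

def log_likelihood(p, xs):
    """Log-likelihood: the product turns into a sum of logs."""
    return sum(math.log(p) if x == 1 else math.log(1 - p) for x in xs)

# Search interior grid points only, since log is undefined at p = 0 and p = 1.
grid = [i / 1000 for i in range(1, 1000)]
best_lik = max(grid, key=lambda p: likelihood(p, data))
best_log = max(grid, key=lambda p: log_likelihood(p, data))

print(best_lik, best_log)  # prints 0.75 0.75 (6 heads out of 8 flips)
```

The grid search is deliberately crude; the point is only that both curves share the same maximizer.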

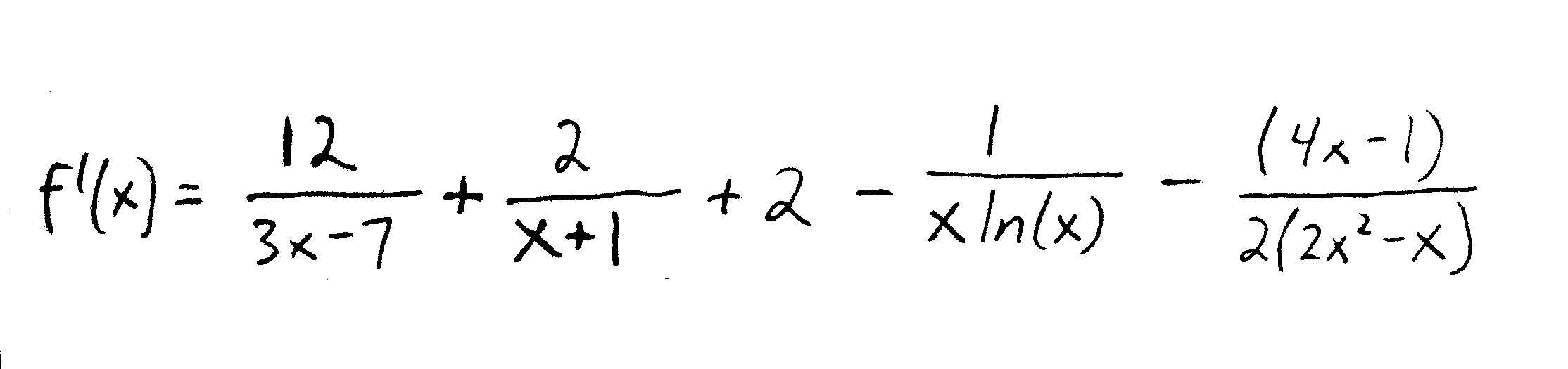

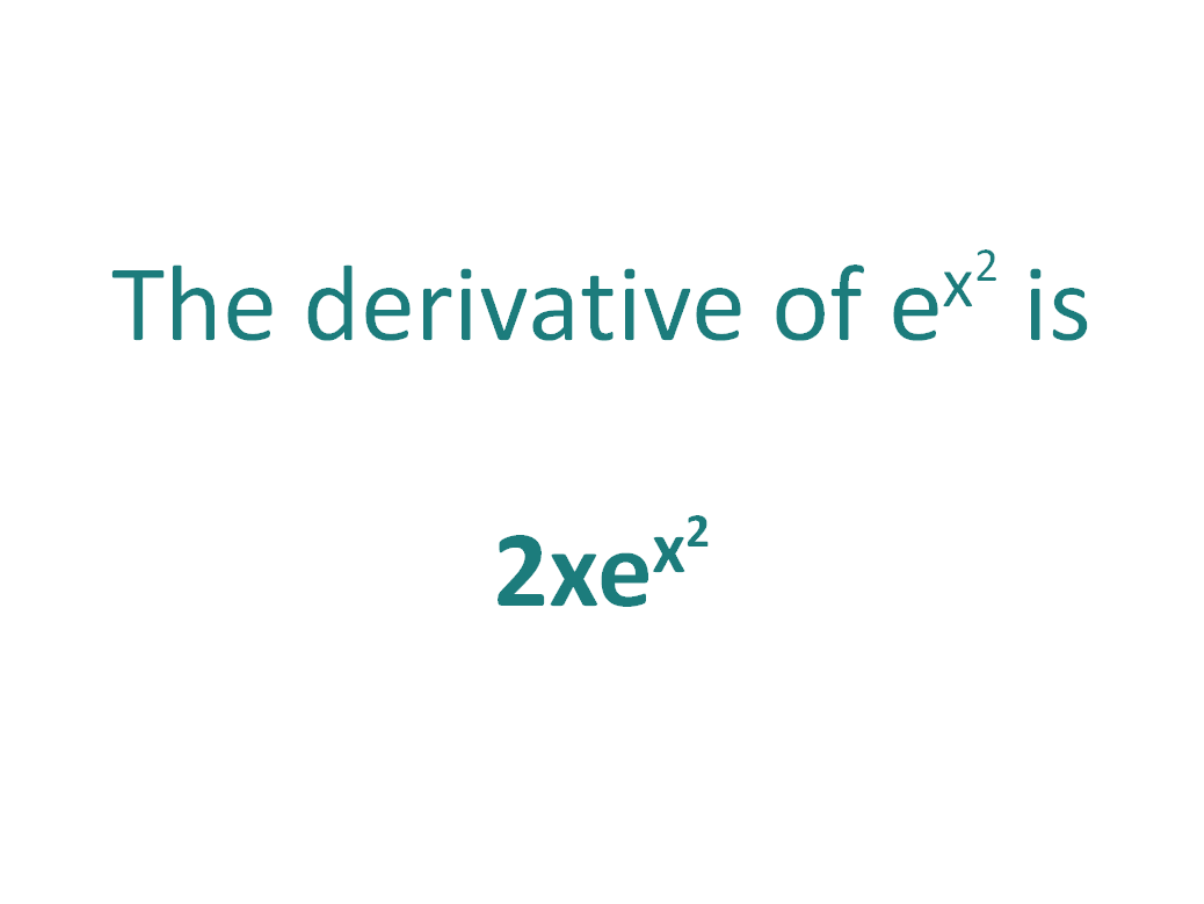

Concerning why we take the logarithm, the likelihood function ends up being a big product: for $X_1,\dots,X_n$, we multiply $n$ functions. Differentiating that product directly is a very nasty product rule derivative. Taking the logarithm turns the multiplication into addition: $\log(xy) = \log(x) + \log(y)$.

Remember that a logarithm is the inverse of an exponential. When we take the logarithm of a number, the answer is the exponent to which the base of the logarithm (often 10 or $e$) must be raised to get the original number. For example, $\log_{10} 100 = 2$, because 10 to the second power is 100.

The derivative of the logarithmic function $y = \ln x$ is given by

$$\frac{d}{dx}(\ln x) = \frac{1}{x}.$$

You will see it written in a few other ways as well. The following are equivalent: $\frac{d}{dx}\log_e x = \frac{1}{x}$, and if $y = \ln x$, then $\frac{dy}{dx} = \frac{1}{x}$. We now show where the formula for the derivative of $\log_e x$ comes from, using first principles. For $x > 0$,

$$\frac{d}{dx}\ln x = \lim_{h \to 0} \frac{\ln(x+h) - \ln x}{h} = \lim_{h \to 0} \frac{1}{x} \ln\left[\left(1 + \frac{h}{x}\right)^{x/h}\right] = \frac{1}{x} \ln e = \frac{1}{x},$$

since $\left(1 + \frac{h}{x}\right)^{x/h} \to e$ as $h \to 0$.

Let's say that you find a unique critical point of the log-likelihood function. This need not be the global maximum of the function. It need not even be a maximum! The maximum could occur at the boundaries, perhaps $\pm \infty$ on $\mathbb{R}$, or $0$ and $1$ on $[0, 1]$ for a Bernoulli distribution. So it is necessary to check the boundaries. If the log-likelihood at the boundaries is lower than at the critical point, you can play some games with the intermediate value theorem to deduce that your critical point is the maximum. The likelihood function need not be so nice that you can get away with tricks like these, however, and you may find yourself in a position where it's necessary to take second derivatives.

Looking at the graph, we can see that, given a number $n$, the sigmoid function $\sigma(n) = \frac{1}{1 + e^{-n}}$ maps that number to a value between 0 and 1. As $n$ gets larger, $\sigma(n)$ gets closer and closer to 1, and as $n$ gets smaller, $\sigma(n)$ gets closer and closer to 0.

The derivative of the cost function: since the hypothesis function for logistic regression is a sigmoid, the first important step is finding the gradient of the sigmoid function.
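The gradient of the sigmoid function has a convenient closed form, $\sigma'(n) = \sigma(n)\,(1 - \sigma(n))$. The Python sketch below uses hypothetical helper names (`sigmoid`, `sigmoid_grad`, `numeric_grad`). It implements the sigmoid in a numerically stable way, compares the closed-form gradient against a central finite difference, and shows the outputs approaching 0 and 1 for very negative and very positive inputs.

```python
import math

def sigmoid(n):
    """Logistic sigmoid 1 / (1 + e^(-n)); maps any real n into the interval (0, 1)."""
    if n >= 0:
        return 1.0 / (1.0 + math.exp(-n))
    # For negative n, use the equivalent form e^n / (1 + e^n) so exp() cannot overflow.
    z = math.exp(n)
    return z / (1.0 + z)

def sigmoid_grad(n):
    """Closed-form derivative: sigma'(n) = sigma(n) * (1 - sigma(n))."""
    s = sigmoid(n)
    return s * (1.0 - s)

def numeric_grad(f, n, step=1e-6):
    """Central finite difference, used only to check the closed form."""
    return (f(n + step) - f(n - step)) / (2 * step)

for n in [-10.0, -1.0, 0.0, 1.0, 10.0]:
    print(f"n={n:6.1f}  sigma={sigmoid(n):.6f}  "
          f"closed_form={sigmoid_grad(n):.6f}  numeric={numeric_grad(sigmoid, n):.6f}")
```

The branch on the sign of `n` is a design choice to keep `math.exp` from overflowing for large negative inputs; mathematically both branches compute the same function.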
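Going from the sigmoid gradient to the derivative of a cost function requires choosing a cost. The sketch below assumes the common cross-entropy cost, which is the negative mean log-likelihood $J(w) = -\frac{1}{m}\sum_i \left[y_i \log h_i + (1 - y_i)\log(1 - h_i)\right]$ with $h_i = \sigma(w \cdot x_i)$. Applying $\sigma' = \sigma(1 - \sigma)$ and the chain rule gives $\frac{\partial J}{\partial w_j} = \frac{1}{m}\sum_i (h_i - y_i)\,x_{ij}$. The data, weights, and function names are hypothetical, and the finite-difference loop only checks the algebra.

```python
import math

def sigmoid(n):
    """Numerically stable logistic sigmoid."""
    if n >= 0:
        return 1.0 / (1.0 + math.exp(-n))
    z = math.exp(n)
    return z / (1.0 + z)

def cost(w, X, y):
    """Mean cross-entropy (negative mean log-likelihood) for logistic regression."""
    total = 0.0
    for xi, yi in zip(X, y):
        h = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)))
        total += -(yi * math.log(h) + (1 - yi) * math.log(1 - h))
    return total / len(X)

def cost_grad(w, X, y):
    """Analytic gradient: (1/m) * sum_i (h_i - y_i) * x_i."""
    m = len(X)
    grad = [0.0] * len(w)
    for xi, yi in zip(X, y):
        h = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)))
        for j, xj in enumerate(xi):
            grad[j] += (h - yi) * xj / m
    return grad

# Hypothetical toy data; the first feature is a constant 1 acting as a bias term.
X = [[1.0, 0.5], [1.0, -1.5], [1.0, 2.0], [1.0, 0.0]]
y = [1, 0, 1, 0]
w = [0.1, -0.3]

# Compare the analytic gradient with a central finite difference in each coordinate.
eps = 1e-6
analytic = cost_grad(w, X, y)
pairs = []
for j in range(len(w)):
    w_plus = list(w)
    w_plus[j] += eps
    w_minus = list(w)
    w_minus[j] -= eps
    numeric = (cost(w_plus, X, y) - cost(w_minus, X, y)) / (2 * eps)
    pairs.append((analytic[j], numeric))
    print(j, analytic[j], numeric)
```

Notice how the $h_i(1 - h_i)$ factor from the sigmoid derivative cancels against the denominators of the log terms, leaving the simple $(h_i - y_i)$ form.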
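For the Bernoulli case mentioned above, the log-likelihood of $k$ ones in $n$ observations is $\ell(p) = k \log p + (n - k)\log(1 - p)$, with $\ell'(p) = \frac{k}{p} - \frac{n-k}{1-p}$ and $\ell''(p) = -\frac{k}{p^2} - \frac{n-k}{(1-p)^2}$. The minimal Python sketch below (function names are hypothetical) checks the boundaries first. When every observation is 0, or every observation is 1, there is no interior critical point and the maximum sits at $p = 0$ or $p = 1$. Otherwise it uses the unique critical point $\hat p = k/n$, where the negative second derivative confirms a maximum.

```python
def log_lik_derivs(p, k, n):
    """First and second derivatives of l(p) = k*log(p) + (n - k)*log(1 - p), for 0 < p < 1."""
    d1 = k / p - (n - k) / (1 - p)
    d2 = -k / p**2 - (n - k) / (1 - p) ** 2
    return d1, d2

def bernoulli_mle(data):
    """Maximize the Bernoulli log-likelihood over p in [0, 1], checking the boundaries."""
    n = len(data)
    if n == 0:
        raise ValueError("need at least one observation")
    k = sum(data)
    if k == 0:
        # l'(p) = -n / (1 - p) < 0 on (0, 1), so l decreases: the max is at the boundary p = 0.
        return 0.0
    if k == n:
        # l'(p) = n / p > 0 on (0, 1), so l increases: the max is at the boundary p = 1.
        return 1.0
    p_hat = k / n  # unique interior critical point: solves l'(p) = 0
    _, d2 = log_lik_derivs(p_hat, k, n)
    # l''(p) < 0 everywhere on (0, 1), so l is concave and p_hat is the global maximum.
    assert d2 < 0, "second-derivative test failed"
    return p_hat

print(bernoulli_mle([1, 0, 1, 1]))  # 0.75 (interior critical point)
print(bernoulli_mle([1, 1, 1]))     # 1.0 (boundary)
print(bernoulli_mle([0, 0]))        # 0.0 (boundary)
```

Here the second derivative is negative on the whole open interval, so this likelihood is one of the "nice" cases; for likelihoods without that property, the boundary and second-derivative checks have to be done case by case.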